In February of this year, as communities and schools in New Jersey were awaiting the arrival of PARCC testing, I wrote this opinion piece for the Bergen County Record. In it, I said:

What can be expected? If experiences of other states that have already implemented PARCC- and CCSS-aligned exams are illustrative, New Jersey’s teachers, students and parents can expect steep declines in the percentage of students scoring in the higher levels of achievement. Neighboring New York, for example, has its own Pearson-designed CCSS-aligned exam, and the percentage of students scoring proficient or highly proficient was cut essentially in half to roughly 35 percent for both math and English….

….There is no reason to believe that 11th-graders today are any less skilled than their peers who took the HSPA last year or who took the NAEP in 2013, but there are plenty of reasons to believe that a drop in scores on PARCC will be exploited for political purposes.

It is a terrible burden being proven correct so often.

The New Jersey DOE released its report on the statewide results on PARCC this week, and immediately their meaning was thoroughly misrepresented by the media and by state Commissioner David Hespe. Writing for NJ.com, Adam Clark said that the results mean “The majority of New Jersey students in grades 3 through 11 failed to meet grade-level expectations on controversial math and English tests the state says provide the most accurate measurement of student performance yet.” In the same article, Commissioner Hespe is cited as saying:

Overall, the results show that high school graduation requirements are not rigorous enough for most students to be successful after graduation, state Education Commissioner David Hespe said. The 2014-15 results set a new baseline for improving student achievement, he said.

“There is still much work to be done in ensuring all of our students are fully prepared for the 21st century demands of college and career,” Hespe said.

Neither claim is remotely based on a factual representation of what these test scores mean. As my colleague Dr. Chris Tienken noted:

https://twitter.com/ChrisTienken/status/656907630825353217

To begin with, the statement that the majority of students “failed to meet grade level expectations” depends entirely on the “meets expectations” and “exceeds expectations” levels being a proper representation of “grade level” work for each year tested. There is no basis for making that determination. PARCC has not provided research to bolster the claim, and, more importantly, we know that reading passages in the exam were several grade levels above what can be developmentally expected of readers at each age. Russ Walsh of Rider University analyzed the sample PARCC reading passages that were available in February of this year and found that, by most agreed-upon methods of determining readability, they were inappropriate for use in testing. There is no justification for such choices in test design unless the test makers want to push the cut scores for meeting and exceeding expectations well above what the median student is even capable of developmentally. It is therefore entirely unjustifiable to call these examination results proof that our students are not doing their work “at grade level,” and, honestly, it is getting damned tiring to have to repeat that endlessly.

Commissioner Hespe’s comments were no more helpful, and they certainly were not based in fact. The Commissioner repeated the often-heard claims that the PARCC exams measure a more appropriate set of skills for demonstrating that our students are “ready” for the 21st century and for gauging their “college and career readiness,” but the justifications for those claims have never been subjected to public scrutiny. While the language of “college and career readiness” is slathered all over the Common Core State Standards and the aligned examinations written by PARCC and SBAC, repeating a slogan is marketing, not research validation. Five years after the standards were rammed through in 43 states and the District of Columbia, we are no closer to understanding whether the standards really embody “college and career readiness,” nor are we any closer to knowing whether the examinations can sort out who is or is not “ready.”

Further, the Commissioner’s claim that the test results “prove” that New Jersey high school graduation requirements are “not rigorous enough for most students to be successful after graduation” rests on two unproven contentions: 1) that PARCC actually sorts those who are “ready” for college and careers from those who are not, and 2) that students who do not score “at expectations” or above can blame any lack of success they have later in life on their primary and secondary education rather than on macroeconomic forces that have systematically hollowed out opportunity.

Let’s consider the first part of that claim. PARCC claims that its Pearson-written exam is a “next generation” assessment that really requires students to think rather than merely respond, but does it actually achieve that end? Julie Campbell of Dobbs Ferry, New York, has had experience with students taking New York’s Common Core-aligned examinations, which are also written by Pearson, and while she supports the Common Core Standards, she is highly critical of the caliber of “thinking” the exams require:

The four-point extended response question is troubling in and of itself because it instructs students to: explain how Zac Sunderland from “The Young Man and the Sea” demonstrates the ideas described in “How to be a Smart Risk-Taker.” After reading both passages, one might find it difficult to argue that Zac Sunderland demonstrates the ideas found in “How to be a Smart Risk-Taker” because sailing solo around the world as a teenager is a pretty outrageous risk! But the question does not allow students to evaluate Zac as a risk taker and decide whether he demonstrates the ideas in the risk taker passage. Such a question, in fact, could be a good critical thinking exercise in line with the Common Core standards! Rather students are essentially given a thesis that they must defend: they MUST prove that Zac demonstrates competency in his risk/reward analysis.

So one can hardly be surprised to find an answer like this:

One idea described in “How to be a Smart Risk-taker” is evaluating risks. It is smart to take a risk only when the potential upside outweighs the potential downside. Zac took the risk because the downside “dying” was outweighed by the upside (adventure, experience, record, and showing that young people can do way more than expected from them). (pg 87)

Do you find this to be a valid claim? Is the downside of “dying” really outweighed by the upside, “adventure”? Is this example indicative of Zac Sunderland being a “Smart Risk Taker”? I think most reasonable people would argue against this notion and surmise that the student has a flawed understanding of risk/reward based on the passage. According to Pearson and New York State, however, this response is exemplary. It gets a 4.

There may not be “one right answer” in an examination like this, but what may actually be worse is that students can be coached to submit “plug and play” answers that mimic a style of thinking but have no depth and can even be nonsensical, so long as they hit the correct rubric markers.

We should also question Commissioner Hespe’s contention that these exams are showing us anything new about our high school graduates, or about our students in general. They most decidedly are not. Again, the New York experience is illustrative. Jersey Jazzman does an outstanding job demonstrating that in New York State, even as proficiency levels tumbled off the proverbial cliff, the actual distribution of scale scores on the different exams barely moved at all. The reason is simple: once raw scores on a standardized exam are converted into scale scores, they reflect, by design, a normal distribution, and it does not matter whether the exam is “harder” or not. The scaled scores will still form a bell curve, and when students’ previous scores are plotted against their current scores, the old scores explain about 85% of the variation in the new ones. In other words, the “new” and “better” tests were not telling us anything that the older tests had not. The decision to set proficiency levels so that many fewer students are “meeting expectations” is a choice that is completely unrelated to the distribution of scores on the tests.
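To make the cut-score point concrete, here is a minimal Python sketch. It assumes a single, hypothetical normal distribution of scale scores (mean 740, standard deviation 30) and two hypothetical cut scores; none of these numbers come from PARCC, New York, or the New Jersey DOE. The distribution of scores never changes in this sketch; only the line drawn through it moves.

```python
from statistics import NormalDist

# Hypothetical scale-score distribution. The mean and standard deviation are
# illustrative assumptions, not published PARCC, New York, or New Jersey figures.
SCORES = NormalDist(mu=740, sigma=30)


def share_meeting_cut(cut: float, scores: NormalDist = SCORES) -> float:
    """Return the fraction of students whose scale score is at or above `cut`."""
    return 1.0 - scores.cdf(cut)


# Two hypothetical cut scores applied to the SAME distribution of scores.
OLD_CUT = 710  # assumed "proficient" cut on an older exam
NEW_CUT = 750  # assumed, higher "meets expectations" cut

if __name__ == "__main__":
    for label, cut in (("old cut", OLD_CUT), ("new cut", NEW_CUT)):
        print(f"{label} ({cut}): {share_meeting_cut(cut):.0%} meet the bar")
```

Under these assumptions, raising the cut from 710 to 750 drops the share of students “meeting expectations” from roughly 84% to roughly 37%, even though not a single student’s score has changed.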

So let’s check whether we should really be concerned that New Jersey students are graduating without being “ready for college and careers.” Here are the statewide PARCC scores, according to the DOE release:

In the language of PARCC, this means that “only” 41% of New Jersey 11th-graders are “on track” to be “college and career ready” in English, and “only” 36% of Algebra students are similarly situated. (Again, remember that score distributions are likely almost entirely unchanged from the previous state assessments; this is about how high the cut scores are set.) Oddly enough, the DOE all but admits that we did not need PARCC to show us this, because New Jersey participates in National Assessment of Educational Progress (NAEP) testing every several years, and, wouldn’t you know it, NAEP and PARCC results are not perfectly aligned, but they come pretty darned close (as do SAT and ACT scores):

The high school reading and algebra proficiency levels are nearly identical on PARCC and NAEP. Dr. Diane Ravitch of New York University sat on the National Assessment Governing Board, which oversees NAEP, and has repeatedly explained that both the “advanced” and “proficient” levels in NAEP represent very high-level work, at the “A” level for secondary students. So not only have the PARCC scores told us things about New Jersey’s students that we already knew from NAEP, but they also reaffirm the NAEP findings that over 40% of New Jersey high school seniors are capable of A-level work in English and over a third are capable of A-level work in algebra.

If the goal is to have all of our students “college and career ready” by reading and doing algebra at the “meets” and “exceeds expectations” levels on a test roughly correlated with NAEP levels that indicate A-level achievement, then we might as well close up shop right now, because our schools will always fail. Moreover, we should vigorously question the implication that any student earning respectable, if not outstanding, grades in core subjects is doomed to failure, and we should certainly question a goal of “college and career readiness” that appears entirely limited to “ready for admission to a four-year selective college.” The nonsensical approach of using cut scores to identify the percentage of students likely to seek a four-year degree, and labeling only those students “ready,” is based more on a desire to label more schools and students as failures than on any other consideration.

The reality is that there are crises relating to education and opportunity, both in New Jersey and in the country as a whole. The first crisis is the distribution of opportunity through our education system. I can walk a few miles from the campus where I teach and find a community where over 70% of the adults over the age of 25 have a college degree, and I can walk a few miles in the exact opposite direction and find a community where the figure is only 12%. That is unacceptable and needs to change; it is also something we knew full well before the PARCC examinations came along, and something we will not address by decrying test scores while ignoring the importance of fair and equitable school funding.

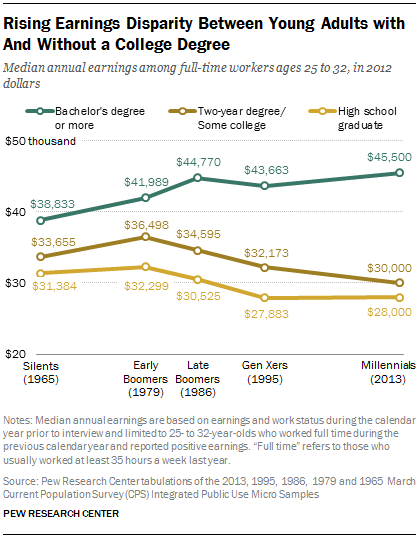

The second crisis is in our economy, which has shown no signs of actually needing more people with bachelor’s degrees. Since 1986, inflation-adjusted wages for people with a BA have grown by only $700 nationally; the college wage premium has grown largely because wages for people without those degrees have collapsed:

Far from needing many more college graduates, which would push wages down even further, we need an economy where people who work full time without a degree can live well above subsistence level and closer to their college-educated peers, as they did before 1980. Perhaps Commissioner Hespe and his fellow PARCC supporters are arguing that college really is the new high school. If so, they had better get to work right away on making it free for everyone, because we cannot possibly survive an economic system that both requires everyone to have a specific degree and requires them to accumulate crushing debt in pursuit of it.

(Just a side observation: remember when PARCC promised that its “next generation assessments” would “help teachers know where to strengthen their instruction and let parents know how their children are doing”? It is now about half a year after testing, and those students have been in their NEW teachers’ classrooms for almost two full months. It is far too late for teachers to use the score reports to adjust curricula they spent all summer developing without the PARCC results. If the goal of the assessments was to give teachers actionable data in anything remotely resembling real time, they are a crashing, embarrassing failure, and given the late-spring testing schedule, they are likely to remain so.)
